Introduction

Over the past decade, enterprise investment in data infrastructure – spanning IoT ecosystems, cloud platforms, and advanced operational technologies – has fundamentally transformed the industrial landscape. Today, manufacturing systems generate continuous streams of real-time data from sensors, machines, and production lines at a scale that was previously unimaginable.

Yet as operational environments grow increasingly complex and data-rich, a more pressing question has moved to the forefront of digital transformation: how can organizations progress from passively observing their operations to actively optimizing them in real time?

Conventional tools – dashboards, periodic reporting, and isolated simulation models – have undoubtedly advanced operational visibility. However, they remain insufficient for enabling the kind of continuous, data-driven decision-making that modern industrial complexity demands. The gap between generating insight and taking action persists.

Closing that gap requires a fundamentally different approach to operations.

Forward-looking organizations are moving beyond reactive analysis toward an integrated model – one that connects live operational data with simulation, predictive intelligence, and continuous optimization. In this model, data is no longer a passive record of past events; it becomes an active input to every decision.

This shift is being driven by one of the most consequential concepts in industrial technology today. What exactly is a Digital Twin – and why is it becoming an indispensable layer in modern operational architecture?

Why It Matters

The rising prominence of Digital Twin is not a product of technological enthusiasm – it reflects a structural shift in how industrial operations are managed and optimized.

The widespread adoption of Industry 4.0 technologies – IoT, artificial intelligence, and real-time analytics – has given organizations a level of operational visibility that was previously unattainable. Yet visibility, on its own, does not drive better outcomes. The capacity to observe is not the same as the capacity to act.

Most enterprise digital systems remain built on a fundamentally linear logic: data is collected, analyzed, and reported. This model improves transparency, but it is reactive by design – it tells organizations what has already happened, not what they should do next.

Meanwhile, the operational environments these systems are meant to support are growing more volatile. Production floors must absorb supply chain disruptions, increasing performance demands, and rising process variability – all without compromising efficiency or reliability. The margin for delayed decisions is narrowing.

The result is a critical capability gap: the inability to continuously convert operational data into decisions. Digital Twin is designed to close that gap – not by improving how operations are visualized, but by transforming how they are run.

As defined by the Digital Twin Consortium, digital twins deliver actionable intelligence by integrating real-time data, simulation models, and domain expertise. They help organizations move beyond understanding current states toward continuously shaping what comes next.

This is the core proposition of Digital Twin at this moment in industrial history: a closed-loop operational system capable of matching the speed, complexity, and unpredictability of modern production environments.

A Deep Dive into Digital Twin

What is Digital Twin?

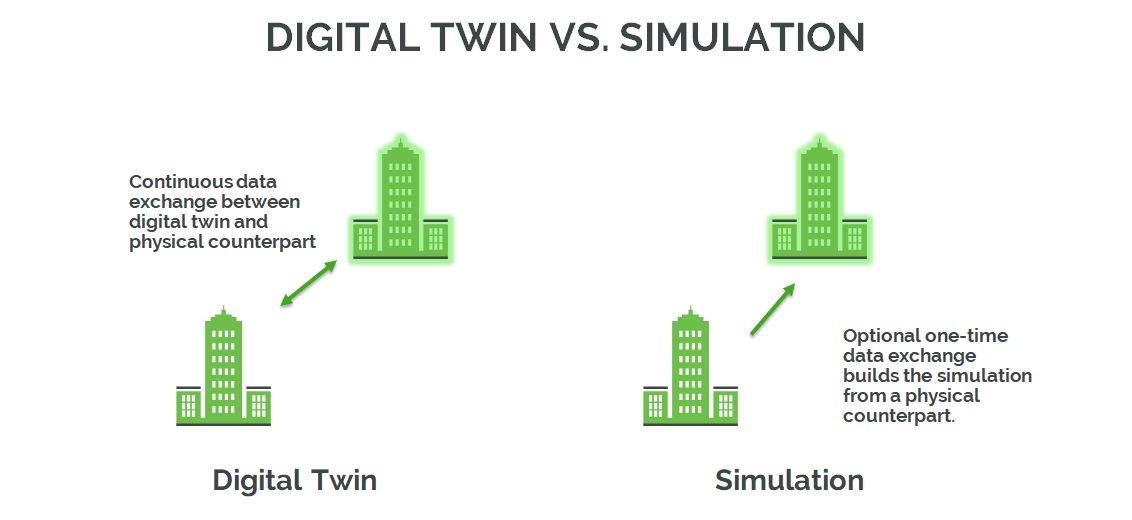

At its core, a Digital Twin is not a static digital replica – it is a continuously evolving system that mirrors a real-world asset, process, or operational environment with precision and persistence.

What distinguishes a Digital Twin from conventional models is the nature of its data relationship. Rather than a one-directional feed, it operates on a live, bidirectional exchange: sensor data, system events, and operational inputs continuously refresh the digital counterpart, while intelligence generated on the digital side flows back to shape physical performance.

This makes passive observation a starting point, not a destination. Organizations working with Digital Twins gain the operational capacity to:

- Track real-time conditions and performance across assets and systems

- Run scenario tests without touching live operations

- Anticipate outcomes from actual operating data – not assumptions

- Drive continuous process improvement grounded in real-world behavior

Crucially, a Digital Twin is not a fixed artifact. It matures alongside the physical system it represents – accumulating knowledge not just of how something was designed, but of how it performs under real operating conditions.
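
The bidirectional exchange described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the `PumpTwin` class, its field names, and the linear temperature model are hypothetical and do not come from any specific Digital Twin platform.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class PumpTwin:
    """Hypothetical twin of a single pump; all names are illustrative."""
    speed_rpm: float = 0.0
    temperature_c: float = 20.0
    history: list = field(default_factory=list)

    def ingest(self, reading: dict) -> None:
        """Physical -> digital: refresh the twin from a sensor reading."""
        self.speed_rpm = reading["speed_rpm"]
        self.temperature_c = reading["temperature_c"]
        self.history.append(reading)

    def predict_temperature(self, speed_rpm: float) -> float:
        """Toy model (assumed): each extra 100 rpm adds 1.5 degrees C."""
        return self.temperature_c + 1.5 * (speed_rpm - self.speed_rpm) / 100.0

    def what_if(self, speed_rpm: float) -> float:
        """Scenario test on a copy, so the live twin is never modified."""
        scenario = copy.deepcopy(self)
        scenario.temperature_c = scenario.predict_temperature(speed_rpm)
        scenario.speed_rpm = speed_rpm
        return scenario.temperature_c

    def recommend_speed(self, max_temperature_c: float, candidates: list) -> float:
        """Digital -> physical: highest candidate speed that stays in limits."""
        safe = [s for s in candidates if self.what_if(s) <= max_temperature_c]
        return max(safe) if safe else self.speed_rpm


twin = PumpTwin()
twin.ingest({"speed_rpm": 1000.0, "temperature_c": 60.0})
recommended = twin.recommend_speed(65.0, [1000.0, 1200.0, 1400.0, 1600.0])
```

In this sketch, `ingest` is the path from the physical asset to the model, `what_if` runs scenarios without touching live state, and `recommend_speed` is the return path whose output an operator or controller would act on.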

What Differentiates Digital Twin

Digital Twin is frequently conflated with technologies it superficially resembles. Understanding what sets it apart requires examining not just what each concept represents, but what it can and cannot do.

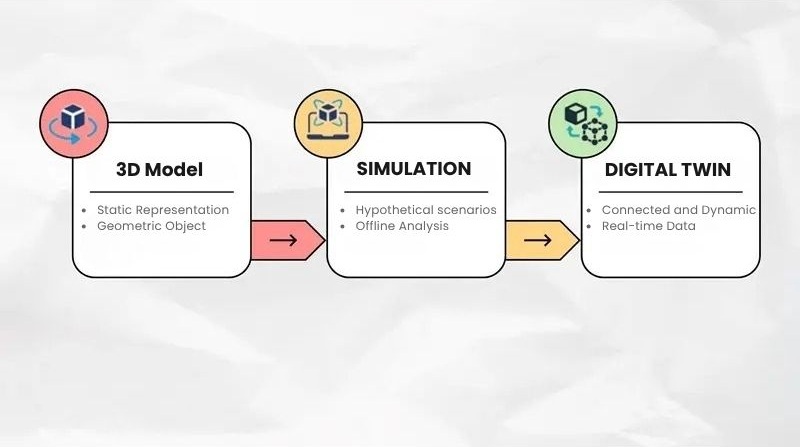

3D Models: Static Visualization

A 3D model is a geometric representation of an object or environment – capturing shape, dimensions, and visual structure at a fixed point in time. Its primary function is to answer one question: What does this look like?

This makes 3D models valuable for design, communication, and spatial planning. But they are inherently retrospective: the moment physical conditions change, the model no longer reflects reality – and remains static until someone manually intervenes.

A Digital Twin starts where a 3D model ends. By integrating real-time data, operational context, and analytical models, it transforms a geometric snapshot into a living system – one that reflects not just how an asset looks, but how it is behaving right now.

Simulation: Hypothetical and Isolated

Simulation is a powerful tool for testing hypotheses – it applies predefined variables to a model to project what might occur under specific conditions. By design, it is a one-directional exercise: a human constructs the scenario, the model responds, and the loop ends there.

This makes simulation well-suited for the design and planning phase, where the goal is to stress-test assumptions before committing to a course of action. Its core question is: What could happen if…?

A Digital Twin operates on an entirely different premise. Rather than projecting hypothetical scenarios, it grounds every model in live operational data – reflecting what is happening across a specific system at any given moment. The result is decision-making anchored in actual operating conditions, not in assumptions.

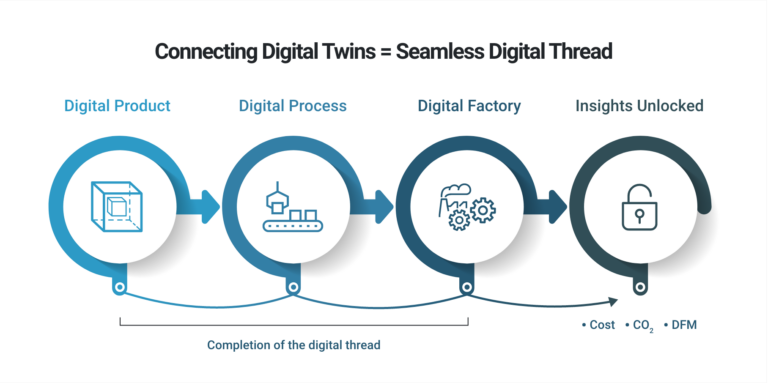

Digital Thread: Connectivity Without Intelligence

Digital Thread describes the structured flow of data across a product or system’s entire lifecycle – from initial design and engineering through manufacturing, deployment, and ongoing operation. Its function is to maintain data continuity, traceability, and consistency as information moves between systems and teams.

It is, in essence, an information architecture – not an intelligence layer. Digital Thread connects and preserves data, but it does not model behavior, generate predictions, or produce operational recommendations independently.

A Digital Twin can draw on this connected data infrastructure as its foundation – transforming a well-structured information flow into active operational intelligence.

Business Value – Where Digital Twin Creates Impact

The business case for Digital Twin is not theoretical; it is being validated across industries where operational complexity, real-time data demands, and the cost of failure are highest. In these environments, the shift from passive monitoring to active intelligence translates directly into measurable performance outcomes.

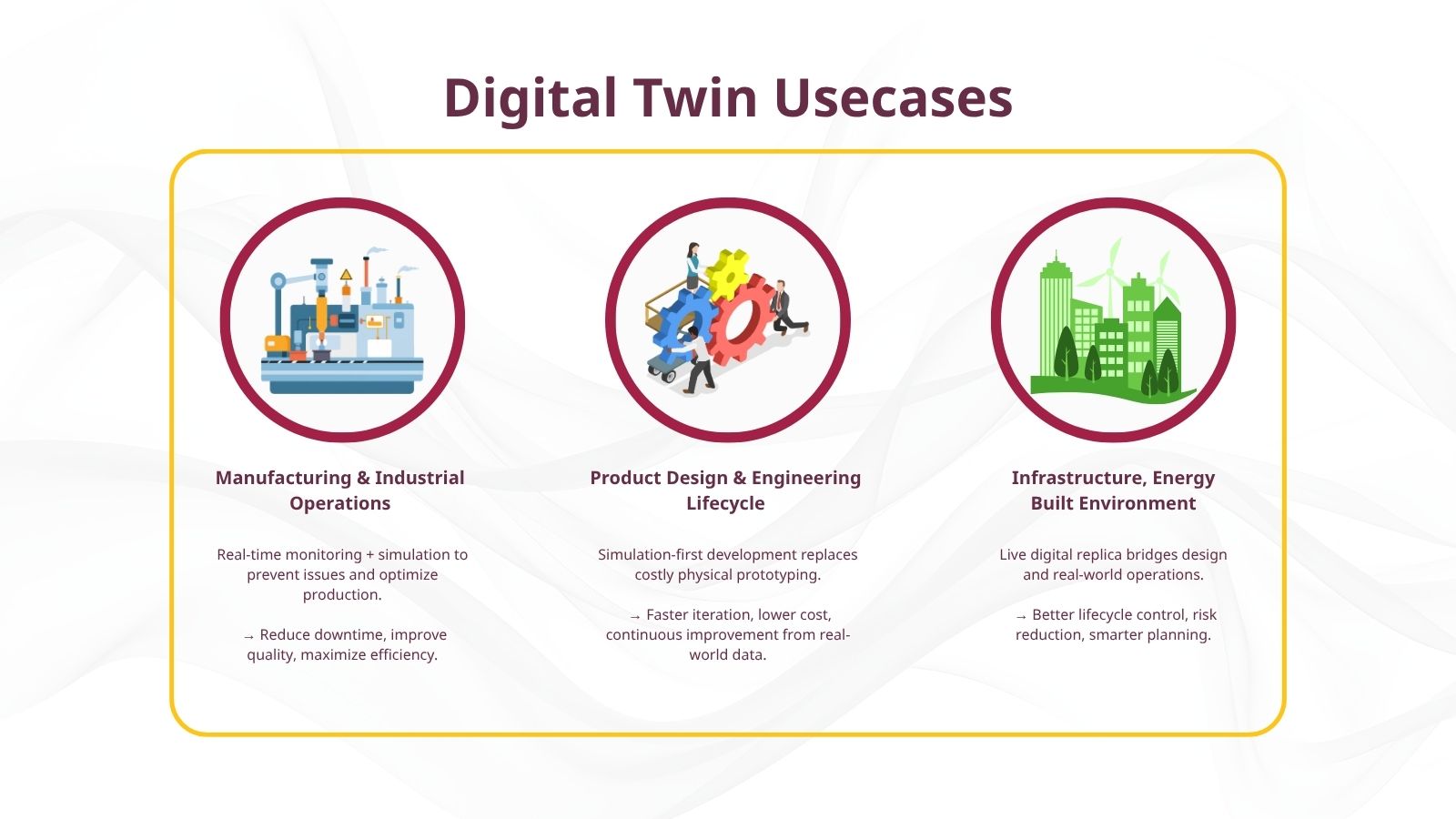

Manufacturing & Industrial Operations

Manufacturing is where the operational case for Digital Twin is perhaps most compelling. Production environments are defined by tight tolerances, interdependent systems, and the constant pressure to maintain throughput – conditions where delayed decisions carry immediate financial consequences.

By maintaining a continuously updated digital counterpart of physical assets and processes, manufacturers gain the ability to identify issues before they escalate, test process changes without disrupting live operations, and drive performance improvements grounded in actual system behavior – not historical averages.

In practice, this translates across four core operational areas:

- Predictive maintenance: Early detection of equipment degradation, reducing unplanned downtime and extending asset lifespan

- Quality control: Real-time identification of process deviations before they propagate into defects

- Operational visibility: A unified, real-time view across machines, workflows, and performance metrics

- Process optimization: Continuous refinement of production workflows through simulation and data-driven iteration
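
As a rough illustration of the predictive maintenance item above, early degradation detection can be sketched as drift detection on a smoothed sensor signal. The function name, the smoothing factor, and the 20% tolerance are assumptions chosen for the example; a production system would tune them per asset and would likely use a richer model.

```python
def ewma_alerts(readings, alpha=0.3, baseline=None, tolerance=0.2):
    """Return the indices at which the smoothed signal exceeds the baseline
    by more than `tolerance` (a fraction, e.g. 0.2 = 20%).

    An exponentially weighted moving average (EWMA) damps isolated spikes,
    so only a sustained upward drift triggers an alert.
    """
    if not readings:
        return []
    if baseline is None:
        baseline = readings[0]  # assumption: the first reading is healthy
    smoothed = readings[0]
    alerts = []
    for i, value in enumerate(readings):
        smoothed = alpha * value + (1 - alpha) * smoothed
        if smoothed > baseline * (1 + tolerance):
            alerts.append(i)
    return alerts


# Hypothetical vibration amplitudes: stable at first, then rising steadily.
vibration = [1.0, 1.0, 1.0, 1.0, 1.5, 1.6, 1.7, 1.8]
drift_points = ewma_alerts(vibration)
```

With these sample values the first elevated reading alone does not trigger an alert; the alert appears only once the rise persists, which is the behavior that separates degradation from noise.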

A well-known example is Boeing, which reported up to a 40% improvement in the quality of airplane parts and systems after implementing Digital Twin technologies across its manufacturing operations (Aviation Tech Today).

Product Design & Engineering Lifecycle

In product development, Digital Twin reshapes the fundamental economics of bringing a product to market. The traditional development model of design, prototype, test, and iterate is resource-intensive by nature. Each physical prototype represents a significant investment of time and capital, and the feedback loop between design and validation remains slow.

Digital Twin compresses this cycle by moving the bulk of testing and validation into a virtual environment. Engineering teams can simulate real-world operating conditions – stress, thermal performance, load behavior – without touching a single physical component. Prototyping becomes a final confirmation step rather than the primary method of discovery.

The impact extends beyond speed. When products are deployed, usage data flows back into the digital model – creating a continuous feedback loop between what was designed and how it performs in the field. Development, in this sense, never fully ends; it evolves alongside the product itself.

The result is a shift from a linear, stage-gated development process to an iterative and intelligence-driven lifecycle – one where decisions at every phase are grounded in data rather than assumptions.

Infrastructure, Energy & Built Environment

Infrastructure-intensive industries – construction, energy, and the built environment – operate on long asset lifecycles, high capital exposure, and limited tolerance for operational failure. In these contexts, the gap between how an asset was planned and how it performs over time carries significant financial and safety consequences.

Where tools like BIM provide a static record of design intent, Digital Twin introduces a continuously updated representation that reflects real-world conditions as they evolve. Three areas concentrate most of the measurable value:

- Lifecycle management: Tracking performance from construction through operation, giving asset owners a single source of truth across the entire lifecycle.

- Planning and simulation: Testing design and operational decisions in a virtual environment before committing to physical changes – reducing uncertainty and rework.

- Risk mitigation: Identifying structural, operational, or safety risks before they materialize – rather than responding after the fact.

In active construction environments, this translates directly into project control: real-time site data is continuously compared against baseline plans, surfacing schedule or cost deviations early enough to act. Integrated with IoT sensors and AI, the same infrastructure monitors hazardous conditions and workforce safety – shifting risk management from periodic inspection to continuous oversight.
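
The comparison of site data against baseline plans can be sketched as follows. The `TaskStatus` fields and both tolerance values are hypothetical; real projects would take them from their own scheduling and cost-control systems.

```python
from dataclasses import dataclass


@dataclass
class TaskStatus:
    name: str
    planned_pct: float   # planned percent complete to date
    actual_pct: float    # measured percent complete on site
    planned_cost: float  # budgeted cost to date
    actual_cost: float   # actual spend to date


def find_deviations(tasks, schedule_tol_pct=5.0, cost_tol_ratio=0.10):
    """Flag tasks that lag the plan or overrun the budget beyond tolerance."""
    flagged = []
    for t in tasks:
        issues = []
        if t.planned_pct - t.actual_pct > schedule_tol_pct:
            issues.append("schedule")
        overrun = t.actual_cost - t.planned_cost
        if t.planned_cost > 0 and overrun / t.planned_cost > cost_tol_ratio:
            issues.append("cost")
        if issues:
            flagged.append((t.name, issues))
    return flagged


site = [
    TaskStatus("foundation", 80.0, 78.0, 100_000.0, 104_000.0),
    TaskStatus("framing", 50.0, 40.0, 60_000.0, 61_000.0),
    TaskStatus("electrical", 20.0, 19.0, 20_000.0, 25_000.0),
]
deviations = find_deviations(site)
```

Here "framing" is flagged for schedule (10 points behind plan) and "electrical" for cost (25% over budget), while "foundation" stays within both tolerances.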

Reality Layer – What It Takes to Make It Work

Building the Foundation: Data, Systems, and Integration

A Digital Twin that exists only as a model is, operationally speaking, incomplete. The architecture matters – but what separates a functioning Digital Twin from an advanced visualization exercise is whether the model is embedded in a real operational system, receiving live data and feeding intelligence back into decisions. This is precisely where many implementations stall: they produce a sophisticated model and stop there.

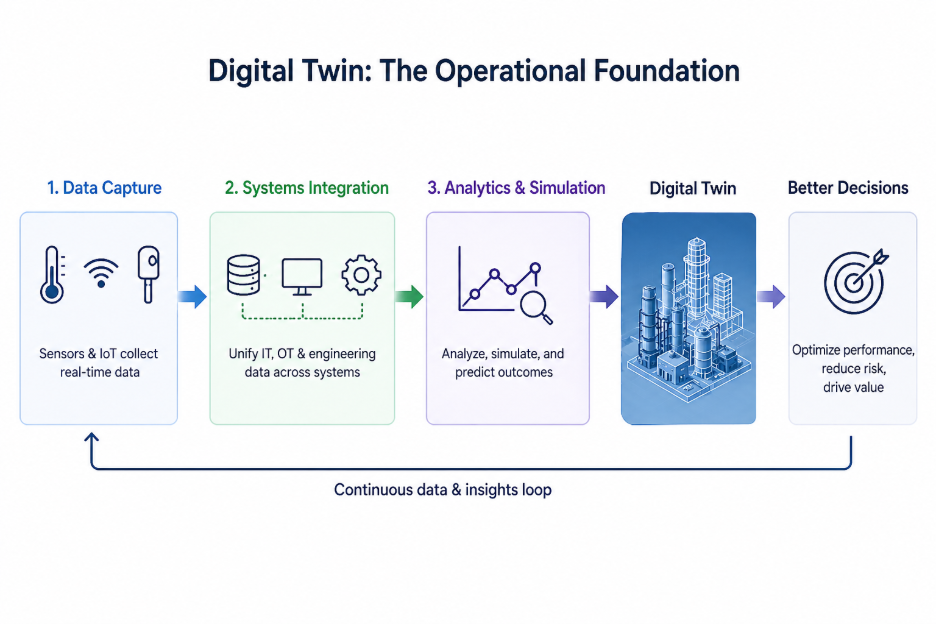

Making a Digital Twin operational requires three interconnected layers, each building on the one beneath it.

- Data capture: Sensors and IoT devices monitor physical conditions – temperature, pressure, motion, system performance – and transmit that data continuously through cloud or edge infrastructure. The digital model stays current only as long as this pipeline remains reliable.

- Systems integration: Industrial environments are structurally fragmented – data lives across IT, OT, and engineering systems that were rarely designed to communicate with one another. Integration is not a peripheral concern; it is what determines whether data becomes a decision asset or remains siloed information. Without it, the model reflects only part of the operational reality.

- Analytics and simulation: With unified, real-time data as its input, this layer detects patterns, models behavior, and evaluates future scenarios. It is what moves the system from describing current conditions to anticipating and shaping what comes next.

Together, these layers form the operational backbone of a Digital Twin. Remove any one of them, and the system degrades – from an active decision tool back into a passive model.
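
A minimal sketch of how the three layers might chain together is shown below. The message format, the `asset_registry` lookup, and the per-line temperature limit are all assumptions for illustration; they stand in for whatever sensor protocol, asset system, and analytics a given plant actually uses.

```python
from statistics import mean

REQUIRED_FIELDS = {"asset_id", "metric", "value"}


def capture(raw_messages):
    """Data capture: keep only well-formed sensor readings."""
    return [m for m in raw_messages if m.keys() >= REQUIRED_FIELDS]


def integrate(readings, asset_registry):
    """Systems integration: enrich readings with context held in another
    system (here a hypothetical asset registry keyed by asset_id)."""
    unified = []
    for r in readings:
        context = asset_registry.get(r["asset_id"])
        if context is not None:
            unified.append({**r, **context})
    return unified


def analyze(unified):
    """Analytics: average temperature per production line versus its limit."""
    by_line = {}
    for row in unified:
        if row["metric"] == "temperature_c":
            by_line.setdefault(row["line"], []).append(row)
    report = {}
    for line, rows in by_line.items():
        average = mean(r["value"] for r in rows)
        limit = min(r["limit"] for r in rows)
        report[line] = {"average": average, "over_limit": average > limit}
    return report


raw = [
    {"asset_id": "m1", "metric": "temperature_c", "value": 70.0},
    {"asset_id": "m2", "metric": "temperature_c", "value": 95.0},
    {"asset_id": "m3", "metric": "temperature_c", "value": 60.0},
    {"metric": "temperature_c", "value": 50.0},  # malformed: no asset_id
]
registry = {
    "m1": {"line": "A", "limit": 80.0},
    "m2": {"line": "A", "limit": 80.0},
    "m3": {"line": "B", "limit": 85.0},
}
report = analyze(integrate(capture(raw), registry))
```

The dependency between layers is visible in the code: without `integrate`, the readings carry no line or limit information, so `analyze` has nothing to evaluate them against.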

Activating the System: From Insight to Operations

Infrastructure is a prerequisite, not a differentiator. A Digital Twin backed by robust data pipelines and integrated systems still delivers no operational value if it remains separate from the workflows where decisions are made.

What activates the system is the feedback loop. Real-time monitoring surfaces what is happening across physical assets; simulation lets teams interrogate what could happen under different conditions; and the resulting intelligence must flow back into the physical environment – through operator decisions, automated controls, or both. It is this closed loop – from observation to insight to action – that distinguishes a functioning Digital Twin from a sophisticated dashboard.

Without that return path, the model accumulates insight that never reaches execution. And in operational terms, insight that does not change behavior has no value.
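
The closed loop described above can be sketched as a single observe, simulate, act step. The energy model and the function names are hypothetical; `read_sensor` and `apply_setpoint` stand in for whatever monitoring and control interfaces a given plant exposes.

```python
def simulate_energy(setpoint_c, ambient_c):
    """Toy model (assumed): energy use grows with the gap to ambient."""
    return abs(setpoint_c - ambient_c) * 2.0


def closed_loop_step(read_sensor, apply_setpoint, candidates, min_c, max_c):
    """Run one pass of the loop and return the setpoint that was applied."""
    ambient_c = read_sensor()  # observation
    allowed = [c for c in candidates if min_c <= c <= max_c]
    if not allowed:
        return None  # nothing safe to apply, so leave the plant untouched
    # Insight: pick the allowed setpoint with the lowest simulated energy use.
    best = min(allowed, key=lambda c: simulate_energy(c, ambient_c))
    apply_setpoint(best)  # action: the return path into operations
    return best


applied = []  # stand-in for a controller or an operator work queue
chosen = closed_loop_step(
    read_sensor=lambda: 30.0,
    apply_setpoint=applied.append,
    candidates=[18.0, 22.0, 26.0, 35.0],
    min_c=18.0,
    max_c=26.0,
)
```

Removing the `apply_setpoint` call leaves a function that still computes the best option but changes nothing in the physical environment, which is the dashboard case the text warns about.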

Equally important is the question of where to apply it. Digital Twin does not generate value by being deployed broadly – it generates value when it is precisely targeted. Reducing unplanned downtime, improving production yield, cutting energy waste: each of these represents a specific business objective that shapes how the system is configured, what data it prioritizes, and how its outputs are acted upon.

This is also why integration with existing operational systems – MES, ERP, process control infrastructure – is not optional. These are the systems through which decisions become actions. A Digital Twin that cannot communicate with them produces recommendations that go nowhere.

The Real Challenges – Operationalizing Digital Twin

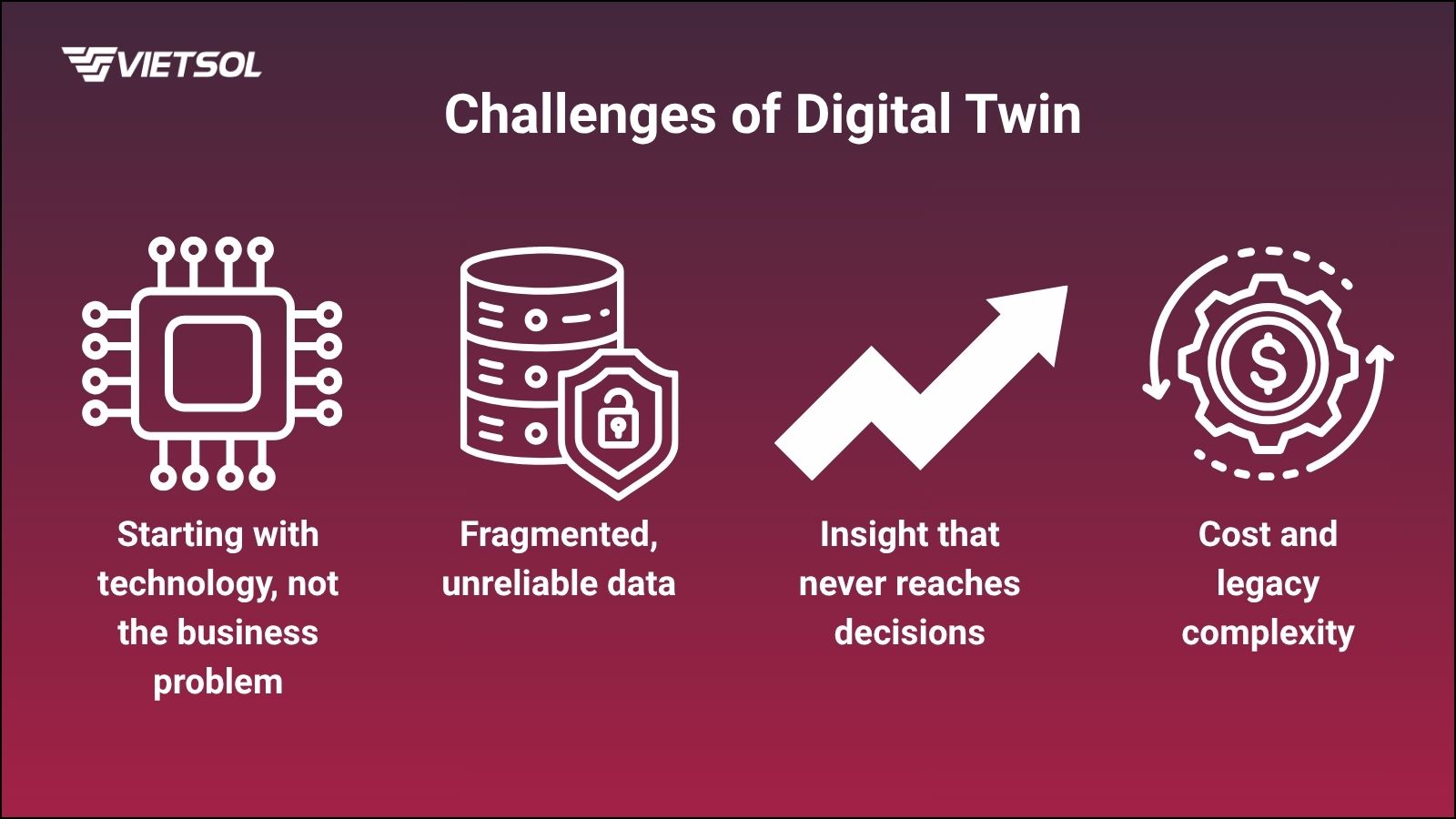

Growing adoption has not translated uniformly into impact. Across industries, a significant share of Digital Twin initiatives fall short – and the failure point is rarely the technology. More often, it is the approach.

Four implementation challenges account for most of the gap between ambition and outcome:

- Starting with technology, not the business problem: Initiatives built around the model rather than a defined operational objective tend to produce sophisticated outputs that no one knows how to act on. Without a concrete use case, there is no standard against which the system’s value can be measured.

- Fragmented, unreliable data: A Digital Twin is only as accurate as the data feeding it. In most industrial environments, data is distributed across systems that were never designed to interoperate – making real-time connectivity, data quality, and consistency persistent obstacles rather than one-time setup challenges.

- Insight that never reaches decisions: Generating analytical output is not the same as influencing operations. When a Digital Twin sits outside the workflows where decisions are made, disconnected from MES, ERP, or control systems, it functions as a reporting layer, not an operational one.

- Cost and legacy complexity: The infrastructure, platform, and integration investment required to build and sustain a Digital Twin is substantial, and in environments running on legacy systems, both the technical debt and the organizational change required compound that cost significantly.

Conclusion

Digital Twin is frequently reduced to a visualization or simulation capability: a more sophisticated way of seeing operations. That framing understates what it actually is. The more precise definition is operational: Digital Twin is a decision layer, not a display layer. Its function is not to render the system more visible, but to make the system more governable.

When implemented with that intent – continuously ingesting live data, modeling behavior, and feeding intelligence back into physical operations – it ceases to be an analytical add-on and becomes a core component of how the organization operates. The shift is from monitoring performance to actively shaping it.

Realizing that shift requires a disciplined sequence. The starting point is not technology selection; it is a clearly scoped operational problem with measurable consequences. From there, a reliable data and integration foundation determines whether the twin reflects reality or approximates it. And critically, the system must be wired into the workflows where decisions occur, not positioned as a parallel analytical environment that operators check periodically.

However, that foundation does not have to be built from scratch, and the choice of technology partner at this stage carries more weight than it might initially appear. As an authorized partner of Siemens Digital Industries Software in Vietnam, Vietsol brings to market a set of solutions that directly addresses the infrastructure layers a functioning Digital Twin requires: Simcenter for simulation and predictive testing, Insights Hub for industrial IoT and real-time asset intelligence, Polarion for lifecycle collaboration, and Opcenter for manufacturing operations management.

Taken together, these tools cover the technical ground that separates a Digital Twin that models well from one that operates well – spanning product development, process simulation, operational monitoring, and continuous optimization. For organizations at the stage of turning intent into implementation, Vietsol offers a concrete and locally supported path to making that transition. Contact us today.

Frequently Asked Questions

1. How do digital twins support IoT applications?

Digital twins and IoT work together to turn raw data into real-time insight and action. While IoT devices collect data from the physical world, the digital twin uses that data to create a dynamic virtual model that reflects current conditions and behavior. This connection allows teams to monitor systems remotely, simulate “what-if” scenarios, and make informed decisions without interrupting physical operations. In manufacturing, for example, digital twins use IoT sensor data to predict equipment failures and optimize production. In smart cities, they simulate traffic flow and energy use based on live input from urban sensors.

By bridging the physical and digital worlds, digital twins give structure and meaning to IoT data – turning endless streams of information into clear insights that support predictive maintenance, performance optimization, and faster response to change.

2. Are there different types or levels of digital twins?

Yes, digital twins come in different types and levels, depending on what they represent and how advanced their capabilities are.

Types of digital twins (by scope):

- Component twins: Focus on a single part (e.g., a sensor or motor) and monitor its real-time performance.

- Asset twins: Represent complete assets (e.g., a vehicle or machine) made up of multiple components.

- System twins: Model how assets work together within a system (e.g., a production line or building HVAC).

- Process twins: Simulate entire workflows or operations (e.g., a supply chain or manufacturing process).

Levels of digital twins (by capability):

- Descriptive: Basic digital representation; mostly visual or static.

- Informative: Combines real-world data with the model to enable analysis.

- Predictive: Uses data and simulations to forecast future performance and issues.

- Prescriptive/Autonomous: Powered by AI, these twins can recommend or even take automated actions based on insights.

These types and levels can be combined or scaled depending on the use case – from monitoring a single machine to managing the operations of an entire smart city.

3. Can a human have a digital twin?

The idea of a Human Digital Twin (HDT) is gaining momentum, but it is still a developing field. Unlike machines, the human body and mind are highly complex and unique, making them much harder to replicate fully in digital form. Current efforts focus on building partial twins – for example, digital models of the heart or brain that use data from medical records, wearables, and sensors to simulate health scenarios or personalize treatment.

Research from projects like the Human Digital Twin study and the EU’s Virtual Human Twins initiative shows promising directions, such as creating personal agents, digital simulations of communication, or tools for surgical planning. But these remain experimental. So, while the concept is advancing, a complete digital twin of a human is still a vision – not a reality.
